Beyond the Freeze-Frame: Awakening Your Digital Memories

You have thousands of them. They sit in the cloud, gathering digital dust—snapshots of birthdays, sunsets, quiet moments with pets, and chaotic holiday dinners. We are a generation obsessed with capturing the “now,” yet we often leave these memories frozen in time.

Here is the friction point: A photograph is a pause button, but life is a continuous stream.

When you look back at a photo of a beach trip from five years ago, you see the water, but you don’t hear the crash of the waves. You see the smile, but you miss the laughter that followed. The emotional disconnect grows with every passing year. We crave immersion, but we are stuck with static pixels.

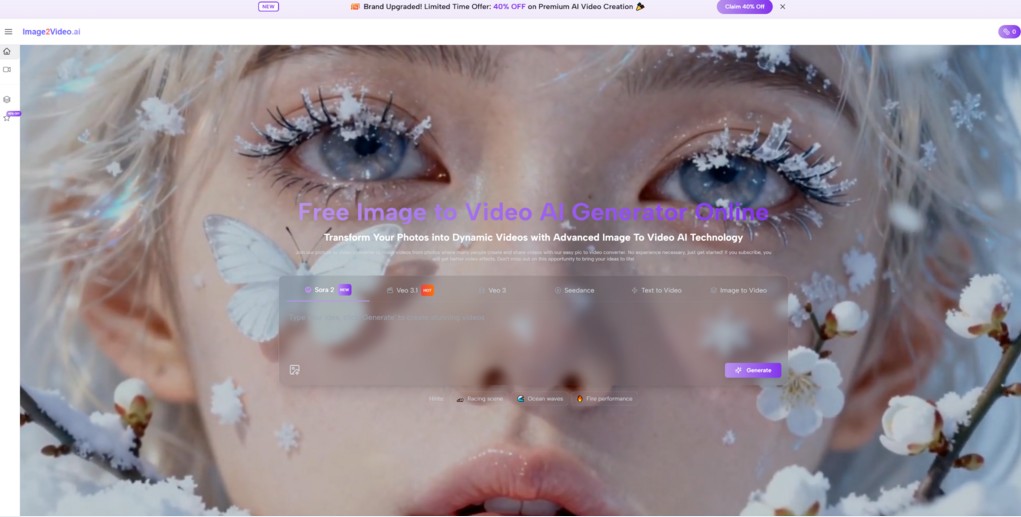

For a long time, bridging this gap required expensive software, hours of keyframing, and professional animation skills. But the landscape has shifted. We are entering an era where artificial intelligence doesn’t just edit our past; it reanimates it. This is where the technology behind platforms like Image to Video AI enters the conversation, offering a glimpse into a future where our albums are no longer silent.

The Shift from “Capturing” to “Creating”

My Personal Experiment with Motion

I recently decided to test the capabilities of modern generative video. I wasn’t looking for Hollywood CGI; I wanted to see if an AI could understand the physics of a memory.

I uploaded a faded, scanned image of my grandfather standing in a garden. The original photo was static, grainy, and over thirty years old.

In my testing, the results were surprisingly grounded. The AI didn’t just warp the image; it seemed to calculate the wind direction. The leaves in the background began to sway gently, and there was a subtle shift in his posture—a natural “breathing” motion that felt eerily human.

It wasn’t perfect—I’ll touch on the limitations later—but the emotional impact was undeniable. It transformed a relic into a moment.

The Narrative Engine: How It Works

Think of this technology not as a camera, but as a dream weaver.

When you provide a static image to an AI, it doesn’t just “see” colors and shapes. It analyzes context. It recognizes that “water” should ripple, “fire” should flicker, and “clouds” should drift. It then predicts plausible motion for that subject over the next few seconds, based on patterns learned from the large amount of video it was trained on.

It’s the difference between a painter and a choreographer. The painter gives you the pose; the choreographer gives you the dance.

Analyzing the Realism: A Look at the Physics

One of the most debated topics in AI video generation is the “Uncanny Valley”—that uneasy feeling when something looks human but acts robotic.

From what I have observed using tools like image2video.ai, the gap is closing, particularly in environmental physics.

Fluid Dynamics and Lighting

In my tests, natural elements like water and smoke were handled with impressive accuracy. The reflection of light on moving water tends to stay consistent from frame to frame, something that has historically been difficult for animation software to achieve without manual rendering.

Human Movement

This is where the “generative” aspect shines. The AI attempts to simulate muscle movement. If you use features like the “Hug Video” or “Dance Video” options, the model isn’t just pasting a GIF over a photo; it is regenerating the subject’s pose and body in each frame to fit the motion.

Note: With industry models such as Sora 2 and Veo 3 advancing rapidly, it is fascinating to see how quickly accessible web-based interfaces are bringing these complex capabilities to everyday users.

Visualizing the Upgrade: Static vs. Kinetic Storytelling

To understand the value proposition of turning images into video, it helps to look at the functional differences. This isn’t just about aesthetics; it’s about engagement.

| Feature | Static Photography | Traditional Animation | AI Video Generation |
|---|---|---|---|
| Time Investment | Instant capture | Hours/Days of editing | Minutes of processing |
| Skill Requirement | Photography basics | High technical skill | Natural language prompting |
| Emotional Depth | Captures a split second | Scripted movement | Reimagined reality |
| Cost | Low | High | Variable / Freemium |
| Best Use Case | Archiving / Printing | Commercial Ads | Social Media / Memory Revival |

The “Before and After” Bridge

- Before: You have a product shot of a coffee cup on a table. It’s clean, professional, but lifeless. It relies entirely on the viewer’s imagination to smell the aroma.

- After: By applying an AI motion filter, steam rises gently from the cup. The morning light shifts across the table. The viewer is no longer just looking at a product; they are witnessing a *morning routine*.

Navigating the Limitations: A Reality Check

It is crucial to approach this technology with managed expectations. While the promotional reels often look like magic, the reality of working with generative AI is more nuanced.

- The “Dice Roll” of Quality

In my experience, you don’t always get the perfect shot on the first try. Sometimes the AI misinterprets a hand gesture, resulting in extra fingers or a warping effect. This is a common failure across current video generation models, not a quirk of any one tool.

- Consistency vs. Creativity

If you are looking for precise, frame-by-frame control (e.g., “move the arm exactly 30 degrees left”), AI might frustrate you. These tools operate on *probability*, not rigid instruction. You are directing a vibe, not a geometry lesson.

- Processing Time

Realism takes computing power, so high-quality renders are not instant. Many platforms note that generating a few seconds of high-definition video can take several minutes. Patience is a necessary part of the workflow.

Practical Applications: Who Is This For?

You don’t need to be a filmmaker to find value here. The utility of image-to-video technology spans several archetypes:

1. The Memory Keeper

This is for anyone holding onto scanned Polaroids of ancestors. Animating old photos can be a profound way to reconnect with family history. Seeing a great-grandparent “smile” again is a unique emotional experience.

2. The Content Creator

Many social media algorithms favor video content over static images. For creators, turning a travel photo into a 4-second looping ambient video can increase dwell time (how long a viewer stays on a post before scrolling past it).

3. The E-commerce Brand

As the coffee cup example shows, movement catches the eye. A subtle animation on a product listing can help a brand stand out in a crowded marketplace.

How to Start Your Journey

If you are curious to try this yourself, the barrier to entry is lower than ever. You generally don’t need high-end graphics cards or complex software installations.

- Select a High-Contrast Image: AI works best when the subject is clearly separated from the background.

- Craft a Simple Prompt: If the tool allows text input, be descriptive but concise. Instead of “make it move,” try “camera pans slowly to the right, leaves blowing in the wind.”

- Iterate: Treat the first result as a draft. Tweak your settings or try a different source image if the physics feel “off.”
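To make the prompt advice concrete, here is a minimal sketch in Python that assembles a prompt from one camera instruction plus a few scene-motion clauses, and rejects vague prompts like “make it move.” The function name `build_motion_prompt` and the list of vague phrases are hypothetical illustrations, not part of any real tool’s API; in practice you would simply type the finished text into the tool’s prompt box.

```python
# Hypothetical helper for illustration only; not any real tool's API.
VAGUE_PROMPTS = {"make it move", "animate this", "add motion"}


def build_motion_prompt(camera: str, *scene_motions: str) -> str:
    """Join one camera instruction and optional scene-motion clauses
    into a short, comma-separated prompt."""
    # Strip whitespace and drop empty clauses so the prompt stays concise.
    parts = [camera.strip()] + [m.strip() for m in scene_motions if m.strip()]
    prompt = ", ".join(p for p in parts if p)
    # Refuse prompts that say nothing about what should move or how.
    if not prompt or prompt.lower() in VAGUE_PROMPTS:
        raise ValueError("Describe the camera and what moves, not just 'make it move'.")
    return prompt


print(build_motion_prompt("camera pans slowly to the right", "leaves blowing in the wind"))
```

The printed result matches the example prompt in the tip above. Keeping the camera move first and limiting yourself to one or two motion clauses reflects the “descriptive but concise” advice.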

The Future of Digital Expression

We are standing on the precipice of a new medium. Just as photography largely took over from painting as the way we document reality, AI video is poised to augment photography.

It is not about replacing the photograph—there will always be beauty in stillness. It is about unlocking the potential energy stored within that stillness. Whether you are a marketer looking to stop the scroll, or a grandchild looking to see a loved one move one last time, the tools are now in your hands.

The technology is still maturing, and it requires a human touch to guide it. But when it works, it feels less like computing and more like remembering.